What is a 'robots.txt' file?

If you've ever clicked around a website and stumbled onto yourdomain.com/robots.txt, you probably wondered whether someone was pulling your leg. It's an unassuming little text file, but it plays a real role in how search engines and crawlers interact with your site.

Let's break down what it does and when you should care.

What is robots.txt, exactly?

robots.txt is a plain text file in the root directory of your website (so yourdomain.com/robots.txt). It tells web crawlers, like Googlebot, Bingbot, and other automated tools, which parts of your site they should and shouldn't crawl.

In other words: it's your website's way of saying "Hey robots, here's what I'd like you to do (or not do)."

It's a convention, not a lock. Well-behaved crawlers follow it. Malicious ones don't. More on that further down.

Why do websites use robots.txt?

A few practical reasons:

- Prevent duplicate content from being crawled (print versions, filtered product listings, session-ID URLs)

- Keep crawlers out of sensitive paths like /admin/, /cgi-bin/, or staging folders

- Stop crawlers from wasting server resources on large downloads or heavy scripts

- Keep crawlers away from pages that aren't ready for the public yet

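Think of it as a polite sign on the door. It's great for keeping good bots out of rooms they don't belong in, but it won't stop someone determined to break in. Keep in mind, too, that robots.txt controls crawling, not indexing: a disallowed URL can still show up in search results if other pages link to it. To keep a page out of the index, use a noindex robots meta tag or X-Robots-Tag header instead, and leave the page crawlable so search engines can actually see that instruction.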

What does a robots.txt file look like?

Plain text, no HTML, no fancy formatting, just rules. A super basic example:

User-agent: *
Disallow: /private/

This tells every crawler to stay out of /private/. A more targeted version:

User-agent: Googlebot
Disallow: /drafts/

This only blocks Google from your drafts folder. Letting everyone crawl everything is the easiest of all:

User-agent: *
Disallow:

An empty Disallow: means "go ahead, crawl everything."
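To see how a rule-following crawler reads these three examples, here's a minimal sketch using Python's standard-library urllib.robotparser. The allowed() helper and the sample paths are hypothetical. The stdlib parser only does plain prefix matching and applies rules in file order, while Google (and RFC 9309) use the most specific match and support * and $ wildcards, so treat it as an approximation of what Googlebot does rather than a replica.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_txt: str, user_agent: str, path: str) -> bool:
    """Hypothetical helper: True if a crawler named `user_agent` may fetch `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse from a string, no network needed
    return parser.can_fetch(user_agent, path)


# The three examples from this section.
BLOCK_PRIVATE = """\
User-agent: *
Disallow: /private/
"""

BLOCK_DRAFTS_FOR_GOOGLEBOT = """\
User-agent: Googlebot
Disallow: /drafts/
"""

ALLOW_EVERYTHING = """\
User-agent: *
Disallow:
"""

print(allowed(BLOCK_PRIVATE, "Bingbot", "/private/report.pdf"))
print(allowed(BLOCK_PRIVATE, "Bingbot", "/blog/"))
print(allowed(BLOCK_DRAFTS_FOR_GOOGLEBOT, "Googlebot", "/drafts/launch-post"))
print(allowed(BLOCK_DRAFTS_FOR_GOOGLEBOT, "Bingbot", "/drafts/launch-post"))
print(allowed(ALLOW_EVERYTHING, "AnyBot", "/private/report.pdf"))
```

The first and third calls print False; the rest print True, because Bingbot isn't named in the Googlebot-only file and an empty Disallow: blocks nothing.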

Does robots.txt do anything for security?

Here's the catch: robots.txt is a request, not an enforcement mechanism. Bots can ignore it, and malicious ones regularly do.

If there's a page you really don't want anyone seeing (an admin panel, a hidden login, an internal dashboard), protect it with authentication and proper permissions. Putting it in robots.txt alone won't keep anyone out, and it can actually draw attention to the exact paths you'd rather keep quiet.

"Here's the one place we don't want you to look" is not a great signal to send.

Common use cases

A few examples of how people use robots.txt in the real world.

Blocking staging or internal pages

Working on a redesign or testing something new? Keep the staging folder out of the index:

User-agent: *
Disallow: /staging/

Preventing duplicate content

E-commerce sites often generate many URLs that are slight variations of the same page. Keep bots out of the noise:

User-agent: *
Disallow: /search/
Disallow: /filter/
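Disallow values are matched as prefixes of the URL path, which is why the two rules above also catch every query-string variation under those folders. A minimal check with Python's standard-library urllib.robotparser (the sample paths are hypothetical) shows the catch: /search without the trailing slash is not covered by Disallow: /search/.

```python
from urllib.robotparser import RobotFileParser

# The duplicate-content rules from the example above.
ECOMMERCE_RULES = """\
User-agent: *
Disallow: /search/
Disallow: /filter/
"""

parser = RobotFileParser()
parser.parse(ECOMMERCE_RULES.splitlines())

# Each Disallow value is a path prefix: anything starting with /search/ or
# /filter/ is blocked, including URLs with query strings.
for path in ["/search/?q=red+shoes", "/filter/color/red", "/search", "/products/red-shoes"]:
    print(path, parser.can_fetch("Googlebot", path))
```

Only /search and /products/red-shoes come back True. If you want to block /search as well, drop the trailing slash (Disallow: /search), keeping in mind that this also blocks anything else starting with /search, such as /searchable-guides.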

Allowing specific bots only

This pattern blocks everyone except Google. Handy for certain migrations or if you're debugging a crawl issue:

User-agent: *
Disallow: /

User-agent: Googlebot
Allow: /
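Crawlers pick the group whose User-agent line names them most specifically and ignore the others, so Googlebot follows only its own group and never applies the blanket Disallow: /. Python's urllib.robotparser behaves the same way for this file, checking named groups before the * group, which makes it a handy way to confirm the pattern does what you expect. The bot names and URL below are just examples.

```python
from urllib.robotparser import RobotFileParser

# The "only Googlebot" pattern from above: two groups separated by a blank line.
ONLY_GOOGLEBOT = """\
User-agent: *
Disallow: /

User-agent: Googlebot
Allow: /
"""

parser = RobotFileParser()
parser.parse(ONLY_GOOGLEBOT.splitlines())

# Pass the crawler's product token ("Googlebot"), not a full browser-style
# User-Agent header: the parser only looks at the text before the first "/".
for bot in ["Googlebot", "Bingbot", "OhDear"]:
    print(bot, parser.can_fetch(bot, "https://yourdomain.com/pricing"))
```

Googlebot prints True; Bingbot and OhDear print False, because neither is named in a group of its own and both fall through to the * group.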

Does robots.txt affect SEO?

Yes, and this is probably why most people care. Used carefully, robots.txt can help your SEO. Used carelessly, it can tank it.

The good:

- Keep crawlers away from low-quality or duplicate pages

- Guide crawl budget on large sites (important if you have millions of URLs)

- Protect parts of your site from being hit by high-volume bot traffic

The bad:

- Accidentally blocking pages you actually want indexed

- Blocking CSS or JS files Google needs to render the page, which can hurt rankings

- Leaving Disallow rules in place long after a section was meant to be re-indexed

If you're not sure whether a rule is safe, test it against the URLs you care about before shipping it, then confirm with Google Search Console's URL Inspection tool once it's live.
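A quick pre-ship check can catch the "bad" cases listed above. The sketch below is one way to do it, assuming you keep a short list of URLs that must stay crawlable (including the CSS and JS your pages need to render); the draft file, the URL list, and the blocked_urls() helper are all hypothetical. It relies on Python's urllib.robotparser, which uses simple prefix matching in file order rather than Google's most-specific-match rules, so treat a clean result as a first pass, not a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft you're about to deploy. The /assets/ rule is the kind of
# mistake that blocks the CSS and JS Google needs to render pages.
DRAFT_ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /assets/
"""

# Hypothetical URLs that must stay crawlable.
MUST_CRAWL = [
    "/",
    "/pricing",
    "/blog/robots-txt-explained",
    "/assets/app.css",
    "/assets/app.js",
]


def blocked_urls(robots_txt: str, user_agent: str, paths: list[str]) -> list[str]:
    """Return the entries of `paths` that `user_agent` would not be allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(user_agent, path)]


problems = blocked_urls(DRAFT_ROBOTS_TXT, "Googlebot", MUST_CRAWL)
if problems:
    print("Do not ship; these URLs would be blocked:", problems)
else:
    print("All must-crawl URLs are allowed.")
```

Here it reports /assets/app.css and /assets/app.js, exactly the kind of rendering resources the list above warns about. Removing the Disallow: /assets/ line makes the list come back empty.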

Where to find your own robots.txt file

Visit yourdomain.com/robots.txt. That's it.

Google Search Console's robots.txt report (which replaced the older robots.txt Tester) also shows the version of the file Google last fetched and flags any errors or warnings in it.
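Because robots.txt always sits at the root of a host, you can work out where to look from any page URL. This is a minimal sketch using only Python's standard library; robots_url() and fetch_rules() are hypothetical helper names. It keeps the scheme and port on purpose: rules apply only to the protocol, host, and port the file is served from, so https://www.yourdomain.com and https://yourdomain.com each have their own robots.txt.

```python
from urllib.parse import urljoin, urlsplit
from urllib.robotparser import RobotFileParser


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL for the host serving `page_url`.

    The path, query string, and fragment of the page URL are dropped;
    scheme, host, and port are kept.
    """
    parts = urlsplit(page_url)
    if not parts.scheme or not parts.netloc:
        raise ValueError(f"expected an absolute URL, got {page_url!r}")
    return urljoin(page_url, "/robots.txt")


def fetch_rules(page_url: str) -> RobotFileParser:
    """Download and parse the live robots.txt for the site hosting `page_url`.

    Needs network access. With the stdlib parser, a 401 or 403 response means
    every URL is treated as disallowed; any other 4xx (such as 404) means
    every URL is treated as allowed.
    """
    parser = RobotFileParser(robots_url(page_url))
    parser.read()
    return parser
```

With network access, fetch_rules("https://yourdomain.com/blog/").can_fetch("Googlebot", "https://yourdomain.com/private/") tells you how the stdlib parser reads your live file.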

About third-party tools and robots.txt

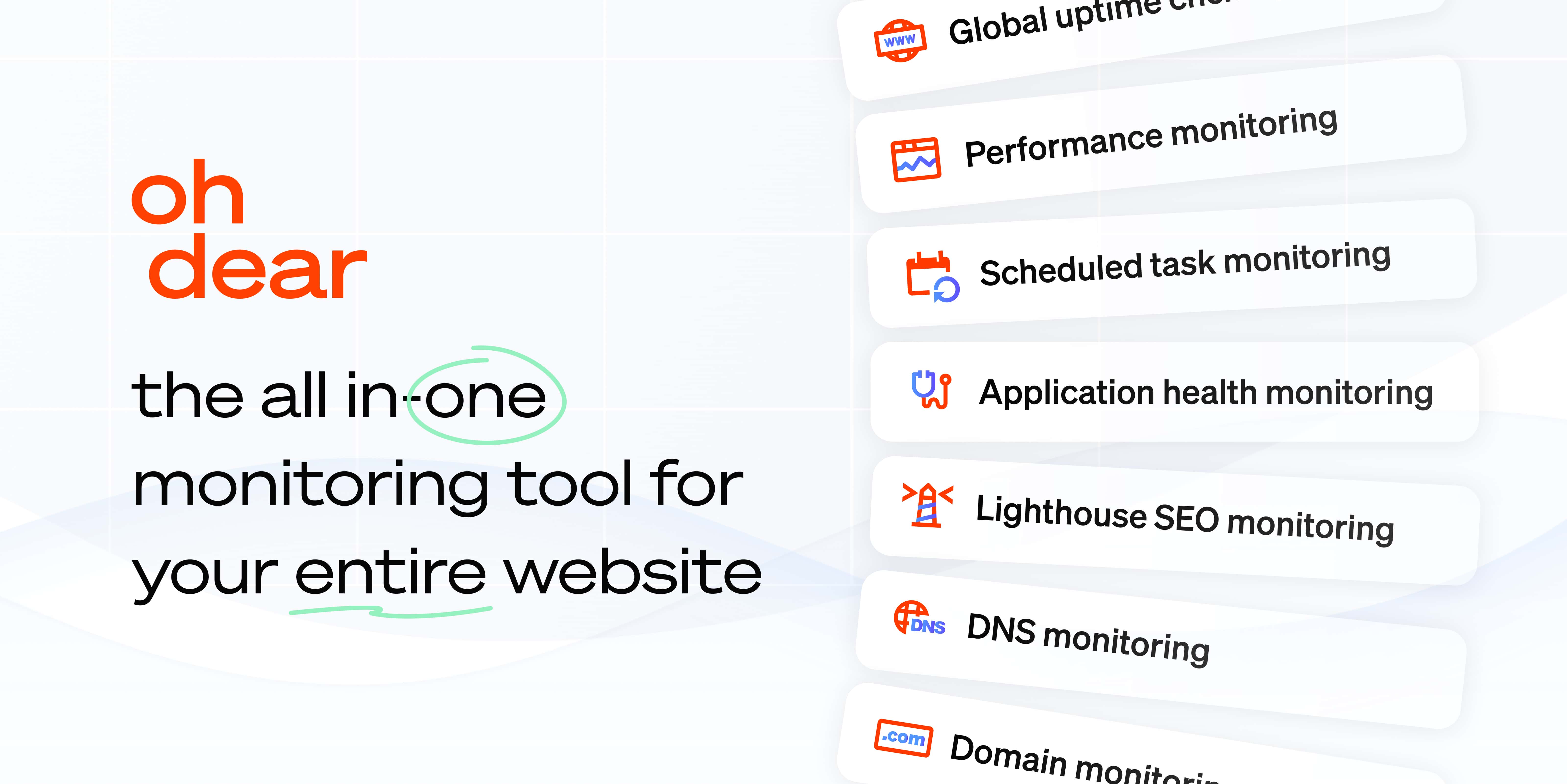

Say you're using a website monitoring tool like Oh Dear and you notice our crawler showing up in your logs. You might wonder whether you should add us to robots.txt.

Short answer: you don't need to. Oh Dear doesn't rely on your robots.txt file to function.

That said, if you want to be explicit (maybe for peace of mind, maybe because your WAF logs are louder than they should be), you can add a block for us:

User-agent: OhDear
Disallow: /junk/

This tells our crawler it can visit every part of the site except /junk/. More on how we crawl, and how to whitelist us, in our FAQ entry about Oh Dear in robots.txt.

That's really all there is to it. Not flashy, but getting robots.txt right quietly improves how search engines understand your site, and how well-behaved bots treat your infrastructure.